Have you ever asked an AI tool a simple question and received a confusing, incomplete, or completely wrong answer? You’re not alone. As powerful as large language models (LLMs) have become, the quality of their output depends heavily on how you communicate with them. The difference between a vague prompt and a well-structured one can be the difference between generic responses and highly accurate, business-ready insights.

This is where prompt engineering for LLMs becomes critical.

In 2026, businesses are increasingly relying on AI for tasks like:

- Content generation
- Customer support automation
- Code generation
- Data analysis and reporting
- Workflow automation

But here’s the challenge: LLMs don’t “think” like humans; they respond based on patterns in data. Without clear instructions, they can hallucinate, misinterpret intent, or produce inconsistent results.

That’s why mastering prompt engineering for LLMs is no longer optional—it’s a core skill for developers, marketers, and businesses using AI at scale.

A well-crafted prompt can help you:

- Get accurate and consistent outputs
- Reduce errors and AI hallucinations
- Save time on revisions and manual corrections
- Improve automation workflows
- Generate high-quality, context-aware responses

In this guide, we’ll break down best practices for prompt engineering for LLMs, helping you create reliable AI outputs across different use cases.

We’ll cover:

- What prompt engineering really means
- Key techniques to improve AI responses
- Common mistakes to avoid
- Real-world use cases
- Advanced strategies for consistent outputs

By the end, you’ll know how to design prompts that turn AI into a reliable, high-performance tool instead of an unpredictable one.

What is Prompt Engineering for LLMs?

At its core, prompt engineering for LLMs is the process of designing and structuring inputs in a way that guides large language models to produce accurate, relevant, and consistent outputs.

Unlike traditional programming, where you write explicit instructions, working with LLMs requires you to communicate intent clearly through prompts. This makes prompt engineering for LLMs a unique blend of logic, language, and experimentation.

A simple prompt like:

“Write about AI”

may produce a generic response.

But a well-engineered prompt like:

“Write a 300-word blog introduction about AI in healthcare, focusing on benefits, challenges, and real-world applications in 2026”

will generate far more precise and useful output.

This demonstrates why prompt engineering for LLMs is essential for anyone using AI tools professionally.

Why Prompt Engineering is Important

The effectiveness of AI outputs depends heavily on the structure of prompts. Poor prompts lead to:

- Vague or irrelevant responses
- Inconsistent tone or structure
- Hallucinated or incorrect information

On the other hand, strong prompt engineering for LLMs helps:

- Improve accuracy and reliability
- Reduce AI hallucinations
- Ensure consistent outputs across tasks
- Save time on editing and corrections

Growing Importance of Prompt Engineering

As AI adoption grows, so does the need for better prompt design.

According to MarketsandMarkets, the AI market is expected to grow from $150 billion in 2023 to over $1.3 trillion by 2030, driven by widespread enterprise adoption of AI tools.

This rapid growth highlights why mastering prompt engineering for LLMs is becoming a critical skill for developers, marketers, and businesses.

How Prompt Engineering Works

To understand prompt engineering for LLMs, it’s important to know how LLMs respond:

- They predict the next token (roughly, a word or word fragment) based on context
- They rely on patterns from training data
- They do not truly “understand” meaning

This means your prompt must provide:

- Clear instructions
- Context and constraints
- Expected format or output style

The more structured your input, the better the output.

Simple Example

Basic Prompt:

“Explain cloud computing.”

Engineered Prompt:

“Explain cloud computing in 150 words for beginners, including benefits, types, and real-world examples in simple language.”

The second version reflects strong prompt engineering for LLMs, leading to more reliable AI outputs.

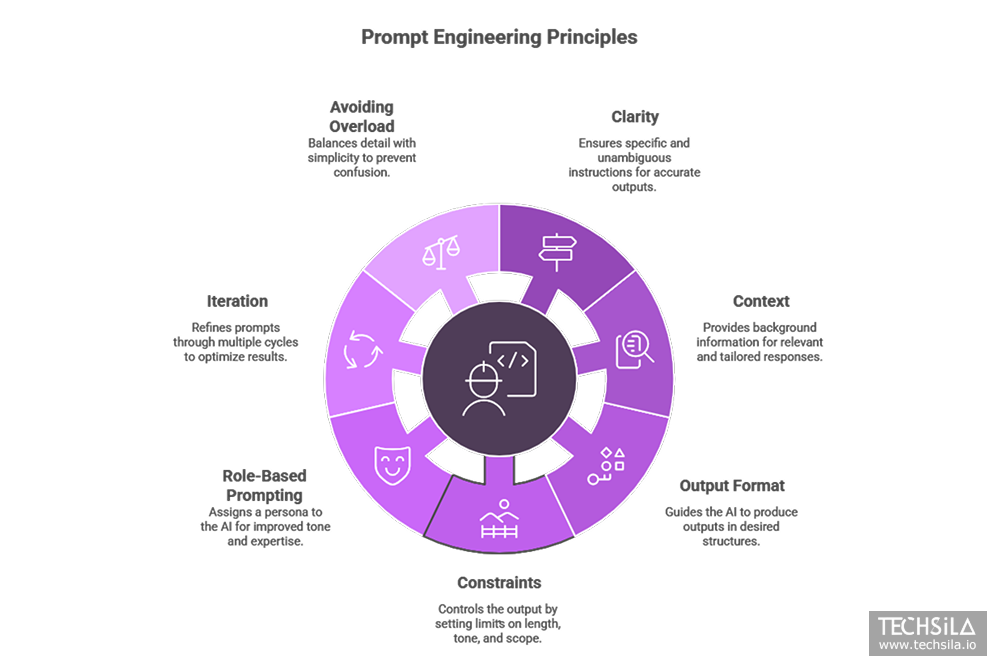

Key Principles of Prompt Engineering for Reliable AI Outputs

To master prompt engineering for LLMs, you need more than just basic instructions—you need a structured approach that ensures consistent, accurate, and high-quality outputs.

In this section, we’ll break down the core principles of prompt engineering for LLMs that professionals use to generate reliable AI outputs.

1. Be Clear and Specific

Clarity is the foundation of effective prompt engineering for LLMs.

Vague prompts lead to vague answers. The more specific your instructions, the better the output.

Example:

Poor Prompt:

“Write about marketing.”

Optimized Prompt:

“Write a 500-word blog about digital marketing strategies for SaaS startups in 2026, including examples and actionable tips.”

Clear prompts reduce ambiguity and improve AI output reliability.

2. Provide Context

LLMs perform better when they understand the context behind the request. Strong prompt engineering for LLMs always includes relevant background information.

Example:

Without Context:

“Write a product description.”

With Context:

“Write a product description for a SaaS AI tool targeting startup founders, focusing on automation, cost savings, and scalability.”

Context helps the AI generate more relevant and tailored responses.

3. Define Output Format

One of the most powerful techniques in prompt engineering for LLMs is specifying the output structure.

You can guide the AI to produce:

- Bullet points
- Step-by-step guides
- Tables
- Blog sections
- JSON or code formats

Example:

“Provide the answer in bullet points with short explanations.”

This ensures consistency across outputs, especially in automation workflows.
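In an automation workflow, a format instruction is most useful when the calling code also checks that the reply follows it. Here is a minimal sketch, assuming a hypothetical reply string and illustrative field names (`summary`, `key_points`) that are not taken from any particular API:

```python
import json

# Hypothetical format instruction appended to a task prompt.
FORMAT_INSTRUCTION = (
    "Respond with a single JSON object with exactly two keys: "
    '"summary" (a string) and "key_points" (a list of strings). '
    "Do not add any text outside the JSON object."
)

# Illustrative schema: field name -> expected Python type.
REQUIRED_KEYS = {"summary": str, "key_points": list}


def parse_reply(reply: str) -> dict:
    """Parse a model reply and check it matches the requested structure."""
    data = json.loads(reply)  # raises json.JSONDecodeError (a ValueError) on invalid JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for key, expected_type in REQUIRED_KEYS.items():
        if not isinstance(data.get(key), expected_type):
            raise ValueError(f"missing or mistyped field: {key}")
    return data


# Stand-in for a model reply; a real reply would come from your LLM client.
example_reply = '{"summary": "Cloud basics", "key_points": ["Scalable", "Pay as you go"]}'
parsed = parse_reply(example_reply)
print(parsed["key_points"])
```

If parsing fails, a workflow can retry the request or flag the item for review instead of passing malformed text downstream.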

4. Use Constraints and Boundaries

Constraints help control the output and reduce errors.

Effective prompt engineering for LLMs includes limits like:

- Word count
- Tone (professional, casual, technical)
- Audience (beginners, developers, enterprises)
- Scope of information

Example:

“Explain blockchain in under 200 words for beginners without technical jargon.”

This prevents overly complex or irrelevant responses.
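Constraints in a prompt are requests, not guarantees, so it can help to check the reply against them afterwards. The sketch below is one possible check, assuming a hypothetical `check_constraints` helper, a hand-written sample reply, and an illustrative list of jargon terms:

```python
def check_constraints(text, max_words, banned_terms=()):
    """Return a list of human-readable constraint violations (empty if none)."""
    problems = []
    word_count = len(text.split())
    if word_count > max_words:
        problems.append(f"too long: {word_count} words (limit {max_words})")
    lowered = text.lower()
    for term in banned_terms:
        # Case-insensitive substring match; simple, but good enough for a sketch.
        if term.lower() in lowered:
            problems.append(f"contains jargon: {term}")
    return problems


# Hand-written stand-in for a model reply to the blockchain prompt.
reply = "A blockchain is a shared record book that many computers keep in sync."
issues = check_constraints(reply, max_words=200, banned_terms=["Merkle tree", "hash function"])
print(issues or "all constraints met")
```

When the list is not empty, the violations can be fed back into a follow-up prompt asking the model to revise its answer.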

5. Use Role-Based Prompting

Assigning a role improves output quality significantly.

This is one of the most effective strategies in prompt engineering for LLMs.

Examples:

- “Act as a senior marketing strategist…”
- “Act as a SaaS product manager…”
- “Act as a technical AI expert…”

Role-based prompts guide the model’s tone, depth, and expertise level.

6. Iterate and Refine Prompts

Prompt engineering is not a one-time task; it’s an iterative process.

Even experts refine prompts multiple times to achieve the best results.

Steps:

- Start with a basic prompt
- Analyze the output
- Add clarity, context, or constraints
- Repeat until output is optimized

This iterative approach is essential in prompt engineering for LLMs to ensure reliable AI outputs.

7. Avoid Overloading the Prompt

While detail is important, too much information can confuse the model.

Balance is key in prompt engineering for LLMs:

- Provide enough context
- Avoid unnecessary complexity
- Keep instructions structured

Quick Summary of Best Practices

- Be clear and specific
- Add context and intent
- Define output format
- Use constraints and roles
- Iterate and refine prompts

These principles form the foundation of prompt engineering for LLMs, helping you consistently generate accurate and reliable AI outputs.

Advanced Prompt Engineering Techniques for LLMs

Once you understand the basics, the next step in prompt engineering for LLMs is using advanced techniques that significantly improve the accuracy, consistency, and reliability of AI outputs.

These techniques are widely used by developers, AI engineers, and businesses to get high-quality, production-ready results.

1. Few-Shot Prompting

Few-shot prompting involves giving the AI examples within the prompt so it understands the expected pattern.

This is one of the most powerful methods in prompt engineering for LLMs.

Example:

“Convert the following sentences into a formal tone:

- I can’t attend → I regret to inform you that I am unable to attend
- Let’s fix this → We should resolve this issue

Now convert: ‘We need to talk’”

This technique improves consistency and formatting accuracy.
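When examples live in code instead of being typed by hand, the same pattern can be reused for any new input. This is a minimal sketch, assuming a hypothetical `build_few_shot_prompt` helper and an `Input:`/`Output:` labeling convention chosen here for illustration:

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble an instruction, worked examples, and the new input into one prompt."""
    lines = [instruction, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    # End with an open "Output:" so the model continues the established pattern.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


examples = [
    ("I can't attend", "I regret to inform you that I am unable to attend"),
    ("Let's fix this", "We should resolve this issue"),
]
prompt = build_few_shot_prompt(
    "Convert each sentence into a formal tone.", examples, "We need to talk"
)
print(prompt)
```

Keeping every example in the same shape matters more than the exact labels, since the model tends to copy whatever structure the examples share.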

2. Chain-of-Thought Prompting

Chain-of-thought prompting guides the model to think step by step before answering.

It is especially useful for:

- Problem-solving
- Calculations
- Logical reasoning tasks

Example:

“Explain step by step how to calculate ROI for a marketing campaign.”

Using this approach in prompt engineering for LLMs reduces errors and improves logical accuracy.
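Step-by-step replies are easier to use in code when the prompt also asks for a clearly marked final line. The sketch below assumes a `Final answer:` convention and a hand-written sample reply with invented campaign figures; the ROI arithmetic uses the common definition, (revenue − cost) / cost:

```python
import re


def build_cot_prompt(question: str) -> str:
    """Ask for numbered reasoning steps followed by a clearly marked final line."""
    return (
        f"{question}\n"
        "Work through the problem step by step, numbering each step.\n"
        "Finish with one line in the form 'Final answer: <value>'."
    )


def extract_final_answer(reply: str):
    """Return the text after the last 'Final answer:' marker, or None if absent."""
    matches = re.findall(r"^Final answer:\s*(.+)$", reply, flags=re.MULTILINE)
    return matches[-1].strip() if matches else None


# Hand-written stand-in for a model reply; the figures are invented for illustration.
sample_reply = (
    "1. Campaign cost is $2,000.\n"
    "2. Revenue attributed to the campaign is $5,000.\n"
    "3. Net gain is 5,000 - 2,000 = 3,000.\n"
    "4. ROI is 3,000 / 2,000 = 1.5, or 150%.\n"
    "Final answer: 150%"
)
answer = extract_final_answer(sample_reply)
print(answer)
```

Separating the reasoning from the final line lets you log the steps for review while passing only the answer to the next stage.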

3. Role-Based System Prompts

Advanced prompt engineering for LLMs often starts with assigning a system-level role.

This sets the behavior of the AI before any task is given.

Example:

“You are a senior AI engineer with 10 years of experience. Provide detailed and technical answers.”

This ensures outputs are:

- More expert-level
- More structured
- More reliable

4. Prompt Templates

Instead of writing prompts from scratch every time, professionals create reusable templates.

This is essential for scaling prompt engineering for LLMs in business workflows.

Example Template:

“Act as a [ROLE]. Create a [TYPE OF CONTENT] for [AUDIENCE] about [TOPIC]. Keep it [TONE] and include [SPECIFIC REQUIREMENTS].”

Templates ensure:

- Consistency across outputs
- Faster content generation
- Easier automation
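The bracketed template above translates directly into code. Here is a minimal sketch using Python's standard `string.Template`; the placeholder names and the sample values are illustrative choices, not a fixed convention:

```python
from string import Template

# Same structure as the bracketed template, with $-style placeholders.
PROMPT_TEMPLATE = Template(
    "Act as a $role. Create a $content_type for $audience about $topic. "
    "Keep it $tone and include $requirements."
)

prompt = PROMPT_TEMPLATE.substitute(
    role="SaaS marketing strategist",
    content_type="LinkedIn post",
    audience="startup founders",
    topic="AI automation",
    tone="professional",
    requirements="three bullet points and a call to action",
)
print(prompt)
```

`substitute` raises a `KeyError` when a placeholder is left unfilled, which catches incomplete prompts before they reach the model.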

5. Instruction + Constraint Layering

Layering instructions is an advanced technique in prompt engineering for LLMs, where you combine:

- Role
- Task
- Context
- Constraints
- Output format

Example:

“Act as a SaaS marketing expert. Write a 300-word LinkedIn post for startup founders about AI automation. Use a professional tone, include 3 bullet points, and end with a CTA.”

This produces highly structured and usable outputs.
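One way to keep the layers separate, so each can be changed without rewriting the whole prompt, is to store them as fields and join them at the end. This is a sketch under that assumption; the `PromptSpec` class and its labels are hypothetical, not part of any library:

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PromptSpec:
    """Holds each prompt layer separately so layers can be edited independently."""
    role: str
    task: str
    context: str = ""
    constraints: List[str] = field(default_factory=list)
    output_format: str = ""

    def render(self) -> str:
        """Join the non-empty layers in a fixed order, one per line."""
        parts = [f"Act as {self.role}.", self.task]
        if self.context:
            parts.append(f"Context: {self.context}")
        if self.constraints:
            parts.append("Constraints: " + "; ".join(self.constraints))
        if self.output_format:
            parts.append(f"Output format: {self.output_format}")
        return "\n".join(parts)


spec = PromptSpec(
    role="a SaaS marketing expert",
    task="Write a LinkedIn post about AI automation.",
    context="The readers are startup founders.",
    constraints=["about 300 words", "professional tone"],
    output_format="3 bullet points, then a closing CTA",
)
print(spec.render())
```

Because each layer is a separate field, you can vary one of them, such as the tone constraint, and compare outputs while everything else stays fixed.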

6. Output Validation Prompts

To improve reliability, you can ask the AI to verify its own output.

Example:

“Review the above answer and correct any factual errors or inconsistencies.”

This technique can catch some errors and inconsistencies, but the model may repeat its own mistakes, so treat it as a complement to external fact-checking, not a replacement for it.
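In code, a validation prompt is simply a second call that receives the first answer. Here is a minimal sketch, assuming the model is any callable that takes a prompt string and returns text; `fake_model` is a stub so the flow runs offline:

```python
from typing import Callable

REVIEW_INSTRUCTION = (
    "Review the answer below and correct any factual errors or inconsistencies. "
    "Return only the corrected answer."
)


def generate_with_review(model: Callable[[str], str], prompt: str) -> str:
    """Run a draft pass, then a second pass that asks the model to review the draft."""
    draft = model(prompt)
    review_prompt = f"{REVIEW_INSTRUCTION}\n\nQuestion: {prompt}\n\nAnswer: {draft}"
    return model(review_prompt)


# Stub standing in for a real model call; replace with your own client function.
def fake_model(text: str) -> str:
    if text.startswith(REVIEW_INSTRUCTION):
        return "reviewed answer"
    return "draft answer"


result = generate_with_review(fake_model, "What is cloud computing?")
print(result)
```

Including the original question in the review prompt gives the second pass the context it needs to judge whether the draft actually answers it.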

7. Multi-Step Prompting

Instead of asking everything in one prompt, break tasks into steps:

- Generate outline
- Expand sections
- Refine tone and structure

This approach is widely used in prompt engineering for LLMs to produce high-quality long-form content.
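The three steps can be chained so that each call receives the previous step's output. The sketch below assumes the model is a plain callable from prompt to text; `run_pipeline`, the prompt wording, and the `fake_model` stub are all illustrative:

```python
from typing import Callable, List


def run_pipeline(model: Callable[[str], str], topic: str) -> str:
    """Outline, expand each section, then refine the combined draft."""
    outline = model(
        f"Create a short outline for an article about {topic}. One section title per line."
    )
    sections: List[str] = []
    for title in outline.splitlines():
        title = title.strip()
        if title:  # skip blank lines in the outline
            sections.append(model(f"Write one paragraph for the section titled: {title}"))
    draft = "\n\n".join(sections)
    return model(
        f"Refine the tone and structure of this draft without adding new facts:\n\n{draft}"
    )


calls = []


# Stub model that records each prompt and returns canned text, so the flow runs offline.
def fake_model(prompt: str) -> str:
    calls.append(prompt)
    if prompt.startswith("Create a short outline"):
        return "Benefits\nChallenges"
    if prompt.startswith("Write one paragraph"):
        return "Paragraph for " + prompt.split(": ", 1)[1]
    return "final article"


article = run_pipeline(fake_model, "AI in healthcare")
print(len(calls), "model calls")
```

Because each step is a separate call, a weak outline can be fixed before any sections are written, which is harder to do with a single large prompt.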

Quick Recap of Advanced Techniques

- Few-shot prompting for pattern learning
- Chain-of-thought for logical accuracy
- Role-based prompts for expertise
- Templates for scalability
- Layered instructions for precision
- Output validation for reliability
- Multi-step prompting for complex tasks

Mastering these techniques takes your prompt engineering for LLMs skills from basic to advanced, helping you generate reliable AI outputs at scale.

Common Prompt Engineering Mistakes to Avoid

Even experienced users can make mistakes in prompt engineering for LLMs, leading to inconsistent, inaccurate, or low-quality AI outputs. Let’s dive deeper into these mistakes and how to avoid them.

1. Using Vague or Generic Prompts

One of the most common mistakes in prompt engineering for LLMs is a lack of specificity. Generic prompts often produce outputs that are too broad, off-topic, or unstructured.

Examples:

Poor Prompt:

“Write about AI.”

Optimized Prompt:

“Write a 300-word blog introduction about AI in healthcare in 2026, focusing on benefits, challenges, and real-world applications.”

Tip: Always include the target audience, context, and scope in your prompt to maximize reliability.

2. Not Providing Enough Context

LLMs cannot “infer” your intent like a human. Missing context often results in irrelevant or incomplete responses.

Poor Prompt:

“Create a marketing strategy.”

Optimized Prompt:

“Create a digital marketing strategy for a B2B SaaS startup targeting small businesses, focusing on lead generation and content marketing in 2026.”

Tip: Include background info, audience details, and objectives for consistent outputs.

3. Ignoring Output Format

Failing to define output structure often produces messy or unorganized AI content. In prompt engineering for LLMs, specifying output format is critical.

Examples of output formats:

- Bullet points
- Step-by-step guides
- JSON or code snippets
- Tables for comparison

Tip: Always define format, tone, and style in your prompt.

4. Overloading the Prompt

Too much information in a single prompt can confuse the LLM. This reduces output quality and makes debugging harder.

Tip: Break complex tasks into smaller sub-prompts and combine outputs later. This technique is especially effective in prompt engineering for LLMs when producing long-form content or multi-step analyses.

5. Not Iterating or Refining Prompts

Many users stop after one attempt, expecting perfect results. However, prompt engineering for LLMs is iterative.

Best Practice:

- Draft initial prompt
- Evaluate output quality
- Refine wording, context, or constraints
- Repeat until output meets requirements

Tip: Keep a library of refined prompts for recurring tasks.

6. Lack of Constraints

Without constraints, AI might generate overly long, irrelevant, or inconsistent responses.

Examples of constraints:

- Word count: “Write under 200 words.”
- Tone: professional, casual, technical
- Audience: beginner, developer, executive
- Scope: focus on key insights only

Tip: Explicitly add constraints in your prompt to guide reliable outputs.

7. Ignoring AI Limitations

LLMs may hallucinate facts, miss context, or provide outdated information. Blind trust is a common mistake in prompt engineering for LLMs.

Tip: Always cross-check outputs, and consider using self-verification prompts:

“Review the above response and correct any errors or inconsistencies.”

Summary of Mistakes

- Vague prompts → generic outputs
- Missing context → irrelevant results
- No format → messy responses
- Overloading prompts → confusion
- No iteration → inconsistent outputs
- Missing constraints → unreliable AI behavior
- Ignoring limitations → factual errors

Tip: Avoiding these mistakes is essential for reliable prompt engineering for LLMs and consistent AI productivity.

Real-World Use Cases of Prompt Engineering for LLMs

Prompt engineering is not just theoretical; it is a critical skill with practical applications across industries. When done correctly, it transforms how businesses leverage AI, making processes faster, more reliable, and cost-effective.

Content Creation and Marketing

Effective prompt engineering for LLMs allows teams to produce high-quality content at scale. AI can generate blog posts, social media updates, email campaigns, and product descriptions, while maintaining tone and style consistency.

For example:

“Act as a SaaS marketing strategist. Write a 500-word LinkedIn post about AI automation for startup founders. Include three actionable bullet points and a call-to-action.”

Businesses applying these principles have reported 30–50% faster content creation and improved audience engagement.

For enterprise-ready AI content automation, explore Techsila’s LLM Development Services to implement professional prompt-engineered workflows.

Code Generation and Software Development

Prompt engineering for LLMs is transforming software development. Developers can generate:

- Code snippets in multiple programming languages
- Unit tests and documentation
- Backend automation scripts

Example prompt:

“Write a Python function to fetch user data from a PostgreSQL database, handling exceptions and including detailed comments.”

For AI-assisted development at scale, check out Techsila’s Backend Development Services for professional integration of LLMs into your workflows.

Customer Support Automation

LLMs, when prompted correctly, can handle multi-turn conversations and deliver personalized customer support.

Example:

“Act as a customer support agent. Respond to a client asking about SaaS subscription cancellation, including refund policy and next steps.”

Organizations using this approach have reduced response times by up to 60%, improving both efficiency and customer satisfaction.

Business Intelligence and Data Analysis

With proper prompt engineering for LLMs, AI can summarize reports, extract insights, and provide actionable recommendations.

Example prompt:

“Analyze Q1 2026 sales data and provide the top five insights with suggested growth opportunities.”

Paired with automated pipelines, this enables fast, reliable decision-making and reduces manual analysis time.

AI-Powered SaaS Applications

Prompt-engineered LLMs can be integrated into SaaS platforms to provide chatbots, virtual assistants, and AI analytics tools. Tools like LangChain let developers build reusable prompt templates and scalable AI workflows for real-world applications.

Example template:

“Act as a SaaS product expert. Suggest three AI-powered features for a productivity tool targeting startups, including pros, cons, and implementation steps.”

Implement enterprise-grade AI solutions with Techsila Backend Development Services to automate tasks and deliver reliable AI outputs.

Benefits Across Industries

- Improved accuracy: Clear, structured prompts reduce AI errors
- Faster output: Automates tasks traditionally done manually
- Consistency: Standardized templates produce uniform results
- Scalability: Reusable prompts work across teams and platforms
- Cost-efficiency: Reduces manual labor and accelerates delivery

Tools and Platforms for Effective Prompt Engineering

Mastering prompt engineering for LLMs is not just about knowing best practices; it’s about using the right tools and platforms to test, iterate, and deploy prompts effectively. The combination of strong prompts and robust platforms allows businesses to scale AI workflows, maintain output quality, and integrate AI into real-world applications.

OpenAI Playground and API

The OpenAI Playground is one of the most widely used platforms for testing and refining prompts. It allows users to:

- Experiment with different prompt formulations in real time
- Control temperature, max tokens, and response styles
- Test outputs across multiple models

The OpenAI API further extends these capabilities by allowing prompt-engineered workflows to be integrated directly into applications. This enables businesses to automate tasks such as:

- AI content generation for blogs, emails, and social media
- Customer support automation with multi-turn conversations
- AI-assisted coding and backend workflows

Tip: Start by experimenting with small prompts in the Playground, then scale via the API. This helps identify high-performing prompts before full deployment.
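Settings such as temperature and max tokens become fields of the request once you move from the Playground to code. The sketch below only builds a request body as a Python dictionary and sends nothing. The field names follow the general shape of a chat-style completion request as I understand it; treat them, the placeholder model name, and the default values as assumptions to check against the current API reference:

```python
import json


def build_chat_request(system_prompt, user_prompt, temperature=0.2, max_tokens=300):
    """Build a request body as a plain dict; nothing is sent over the network."""
    return {
        "model": "your-model-name",  # placeholder, not a real model identifier
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_prompt},
        ],
        "temperature": temperature,  # lower values generally make output less random
        "max_tokens": max_tokens,    # upper bound on the length of the reply
    }


request_body = build_chat_request(
    "You are a concise technical writer.",
    "Explain cloud computing in 150 words for beginners.",
)
print(json.dumps(request_body, indent=2))
```

Keeping the settings next to the prompt in one structure makes it easier to reproduce a result you liked in the Playground.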

LangChain for Template-Based Prompt Engineering

LangChain is a framework designed to help developers build scalable LLM applications with reusable prompt templates. It is particularly useful for:

- Automating multi-step workflows
- Creating structured outputs for business tasks
- Combining prompts with data sources for context-aware responses

Example:

A SaaS company can create a LangChain template to generate weekly performance reports, ensuring consistent tone, format, and accuracy every time.

By combining LangChain with prompt engineering for LLMs, teams can maintain reliable outputs across multiple use cases, from marketing campaigns to backend automation.

Prompt Testing and Analytics Tools

Enterprise-grade prompt management requires tracking, testing, and refining prompts over time. Several tools help achieve this:

- PromptLayer: Tracks prompts, logs outputs, and provides analytics for iterative improvement
- FlowGPT: Offers community-driven templates for testing ideas and learning best practices
- LlamaIndex: Connects LLMs to structured datasets, documents, and external knowledge for accurate outputs

These tools are critical for prompt engineering for LLMs in professional environments, enabling teams to:

- Measure output quality
- Compare prompt variations
- Continuously improve AI reliability
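Comparing prompt variations does not require a dedicated platform to get started. Here is a toy sketch, assuming a hypothetical keyword-based score and a stub model that simply echoes the prompt; a real comparison would call an actual model and use a metric suited to the task:

```python
from typing import Callable, Dict, List, Tuple

# Illustrative quality signal: terms the output is expected to cover.
REQUIRED_TERMS = ["benefits", "types", "examples"]


def keyword_score(output: str) -> float:
    """Fraction of required terms that appear in the output."""
    lowered = output.lower()
    return sum(term in lowered for term in REQUIRED_TERMS) / len(REQUIRED_TERMS)


def compare_prompts(
    model: Callable[[str], str],
    prompts: Dict[str, str],
    score: Callable[[str], float],
) -> List[Tuple[str, float]]:
    """Run each prompt variant once and return (name, score) pairs, best first."""
    results = [(name, score(model(text))) for name, text in prompts.items()]
    return sorted(results, key=lambda item: item[1], reverse=True)


# Stub model: echoes the prompt, so terms in the prompt show up in the "output".
def fake_model(prompt: str) -> str:
    return f"Response to: {prompt}"


variants = {
    "basic": "Explain cloud computing.",
    "engineered": (
        "Explain cloud computing for beginners, including benefits, "
        "types, and real-world examples."
    ),
}
ranking = compare_prompts(fake_model, variants, keyword_score)
print(ranking)
```

With a real model, running each variant several times and averaging the scores gives a fairer comparison, since outputs vary between runs.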

Integration Platforms for Enterprise AI Workflows

To maximize the impact of prompt engineering for LLMs, integration into backend and SaaS platforms is essential. Integration allows:

- Automation of repetitive tasks (e.g., report generation, content creation)
- Real-time analysis and insights from structured and unstructured data
- Embedding AI capabilities directly into applications

Example: A SaaS analytics platform can integrate prompt-engineered LLMs to generate automated insights for clients, complete with summaries, recommendations, and actionable KPIs.

Best Practices When Using Tools for Prompt Engineering

Even with the best platforms, prompt engineering for LLMs requires discipline and strategy:

- Start Small: Begin with limited prompts and scale once successful
- Iterate Continuously: Test, refine, and optimize prompts regularly
- Use Role-Based and Few-Shot Prompts: Guide the AI’s behavior for complex tasks
- Maintain a Prompt Library: Reuse tested prompts to save time and ensure consistency
- Validate Outputs: Regularly check for errors or hallucinations to maintain reliability

Enterprise Tip: Combine multiple tools for the best results. For example, use the OpenAI Playground for testing, the OpenAI API for deployment, LangChain for workflow templates, and PromptLayer for analytics. This ecosystem helps maintain high-quality outputs at scale.

Advantages of Using Platforms for Prompt Engineering

Using these tools and platforms in conjunction with prompt engineering for LLMs provides tangible benefits:

- Consistency: Standardized templates produce uniform outputs across teams
- Efficiency: Reduces manual workload, saving time and costs
- Scalability: Prompts can be reused across multiple AI projects
- Accuracy: Iteration and analytics improve reliability
- Enterprise Integration: Seamlessly connects LLMs to SaaS, backend, and automation workflows

By leveraging these tools, businesses can turn prompt engineering for LLMs from a manual task into a systematic, repeatable, and reliable process, making AI a core operational asset.

Conclusion

Mastering prompt engineering for LLMs is no longer optional—it’s a critical skill for anyone leveraging AI. The effectiveness of large language models depends directly on the quality of prompts. With well-crafted prompts, LLM prompt engineering enables businesses to generate outputs that are accurate, consistent, and actionable.

From content creation and marketing automation to backend development and business intelligence, prompt engineering for LLMs ensures your AI is reliable, efficient, and scalable. Poorly engineered prompts, on the other hand, can lead to vague, irrelevant, or inconsistent outputs, limiting the value of your AI investments.

By following the best practices of prompt engineering for LLMs, including clarity, context, constraints, role-based prompts, iteration, and advanced techniques, you can transform LLMs from experimental tools into high-performing AI systems that drive measurable results. Whether your goal is to automate content workflows, enhance customer support, analyze business data, or integrate AI into SaaS applications, mastering prompt engineering for LLMs is the key to unlocking AI’s full potential.

Ready to leverage prompt engineering for LLMs in your organization? Partner with experts who can help you design reliable, scalable, and enterprise-ready AI solutions. Get started today and request a quote from Techsila.

With professional guidance in prompt engineering for LLMs, you can:

- Optimize AI outputs for accuracy and relevance
- Integrate LLMs seamlessly into your backend and SaaS platforms
- Scale AI solutions across teams and workflows
- Save time and resources while improving efficiency

The future of AI doesn’t depend solely on the technology; it depends on how well you interact with it. Mastering prompt engineering for LLMs ensures your AI outputs are reliable, actionable, and aligned with business goals, giving your organization a competitive edge in 2026 and beyond. By focusing on prompt engineering for LLMs, you’re not just using AI; you’re harnessing its full potential for strategic impact.

FAQs

Q1: What is prompt engineering for LLMs?

Answer: Prompt engineering for LLMs is the practice of crafting clear, structured, and context-aware instructions that guide large language models to generate accurate and reliable outputs. It ensures the AI delivers consistent and actionable results.

Q2: Why is prompt engineering for LLMs important?

Answer: It is important because the quality of AI outputs depends on the prompts. Effective prompt engineering reduces errors, hallucinations, and irrelevant content, making AI outputs more reliable and usable for business or technical tasks.

Q3: Can prompt engineering for LLMs improve content creation?

Answer: Yes! By using well-designed prompts, businesses can generate high-quality blogs, social media posts, email campaigns, and product descriptions efficiently while maintaining tone and consistency.

Q4: What are common mistakes in prompt engineering for LLMs?

Answer: Common mistakes include vague prompts, missing context, ignoring output format, overloading instructions, not iterating prompts, and ignoring AI limitations. Avoiding these ensures accurate and consistent AI outputs.

Q5: Which tools help with prompt engineering for LLMs?

Answer: Tools like OpenAI Playground, LangChain, PromptLayer, and LlamaIndex help test, manage, and refine prompts. These platforms enable businesses to scale prompt engineering for LLMs and achieve reliable, high-quality outputs.